LiveSubtitles

A real-time captioning tool that burns live subtitles into video streams so deaf and hard-of-hearing audiences can follow public talks, conferences, and live events — frame-accurate, operator-driven, RTMP-ready. Deployed by one of the world's largest organisations across multiple countries.

Overview

I built LiveSubtitles to solve a problem I kept seeing at events: deaf and hard-of-hearing attendees either got a printout hours later or nothing at all. The tool gives an operator a text editor where they navigate prepared subtitle lines — and the moment they move the cursor, that text is composited pixel-by-pixel onto every outgoing video frame. The captioned stream then goes live over RTMP to YouTube, Twitch, or any endpoint the venue already uses. No separate caption service. No CDN subscription. One laptop, one operator.

The pipeline I engineered runs as: VideoReader → VideoSubtitleFilter → AudioInputFilter → VideoStreamer. JavaCV and FFmpeg do the heavy lifting: H.264 encoding at configurable bitrate and preset, AAC audio at 120 kbit/s, and an HLS playlist proxy so audience devices can pull the stream directly from the operator machine when there is no external CDN. An SRT session logger timestamps every subtitle change and exports a full .srt file at the end of each session, giving organisations a permanent accessibility record.
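A minimal sketch of how a stage chain like this could be wired in plain Java. It is not the project's code: `SketchFrame` and `FramePipeline` are hypothetical names, the stage names are only labels borrowed from the text above, and the real stages operate on JavaCV frames rather than this stand-in.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.UnaryOperator;

/** Hypothetical stand-in for a decoded video frame (the real tool works on JavaCV frames). */
final class SketchFrame {
    final long timestampMicros;
    final List<String> appliedStages = new ArrayList<>();
    String caption = "";

    SketchFrame(long timestampMicros) {
        this.timestampMicros = timestampMicros;
    }
}

/** Passes every frame through an ordered list of stages. */
final class FramePipeline {
    private final List<UnaryOperator<SketchFrame>> stages = new ArrayList<>();

    /** Appends a stage; the name is recorded on each frame the stage handles. */
    FramePipeline then(String name, UnaryOperator<SketchFrame> stage) {
        stages.add(frame -> {
            SketchFrame out = stage.apply(frame);
            out.appliedStages.add(name);
            return out;
        });
        return this;
    }

    /** Runs one frame through all stages in the order they were added. */
    SketchFrame push(SketchFrame frame) {
        SketchFrame current = frame;
        for (UnaryOperator<SketchFrame> stage : stages) {
            current = stage.apply(current);
        }
        return current;
    }
}
```

In this shape the subtitle stage only needs read access to whatever line the operator currently has selected, so moving the cursor changes the caption on the next frame that passes through.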

The overlay variant was the first build: a stripped-down NetBeans project made for one of the world's largest organisations and now deployed across multiple countries. It ships an always-on-top projector-spanning overlay window, per-line character limits, and a custom prepared-script file format designed for large-auditorium events where operators follow pre-vetted text. The lessons from that deployment drove the 1.0 to 1.2 rewrite into a cleaner Maven project with multi-tab editing, drag-and-drop subtitle tabs, push-to-talk support, and fully configurable subtitle appearance.

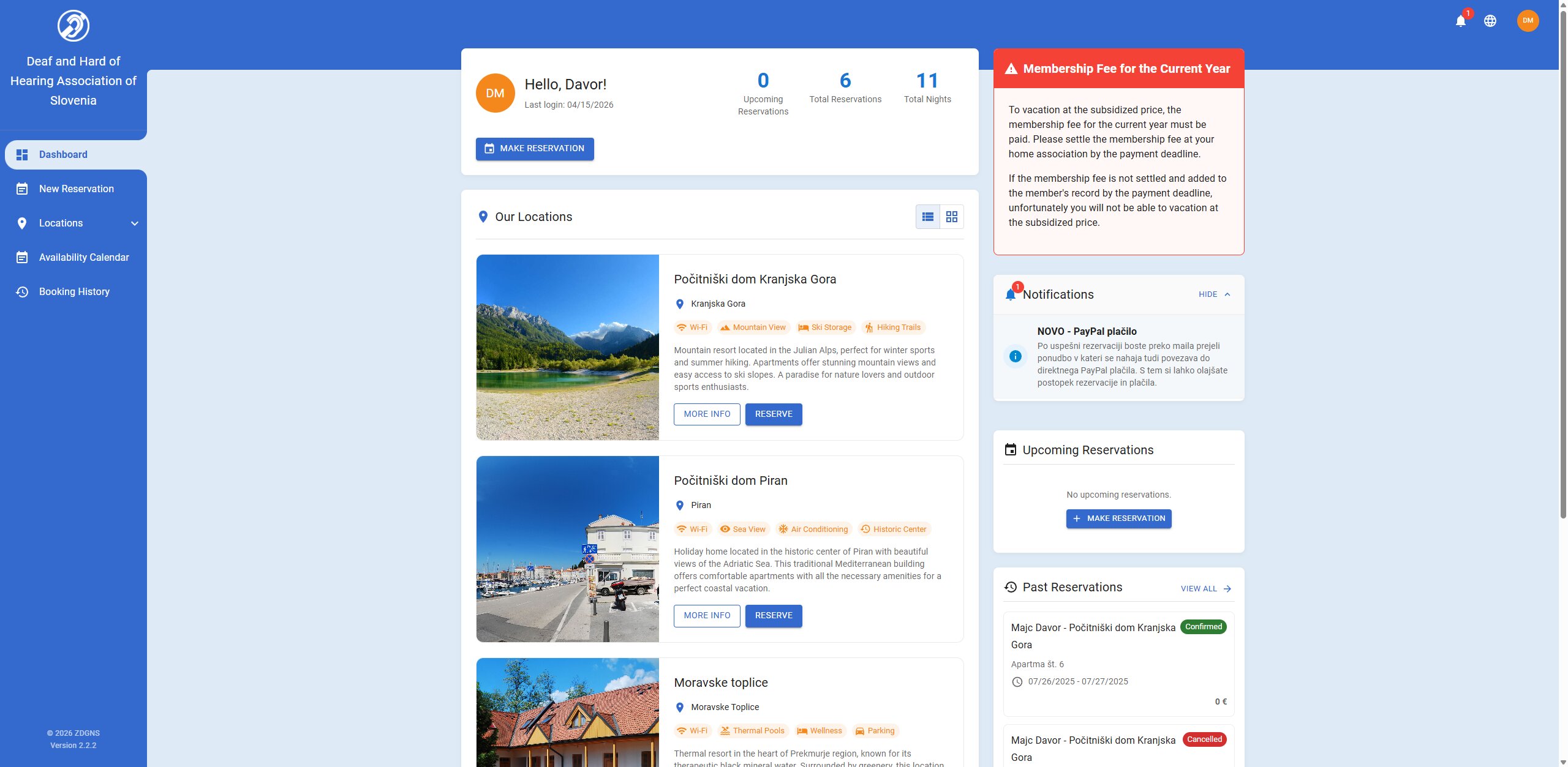

Architecture

Reading the diagram: Four operator inputs — video source (file, HLS, or YouTube URL), microphone line, prepared subtitle document, and optional HLS playlist — all feed into a single FFmpeg-backed frame pipeline. The pipeline composites caption text onto each video frame before it reaches any output. Outputs fan out: RTMP push for live streaming, an SRT session log for archiving, optional local recording, and — in the enterprise overlay variant — an always-on-top overlay window mapped to the projector screen. Every component is swappable through the settings panel without touching the pipeline.
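The compositing step can be illustrated with a source-over alpha blend of a pre-rendered caption image onto a frame. This is a sketch under stated assumptions, not the tool's implementation: `CaptionCompositor` is a hypothetical name, frames are assumed to be row-major `0xRRGGBB` int arrays, and the overlay is assumed to be `0xAARRGGBB`.

```java
/** Hypothetical helper: blends an ARGB caption overlay onto an RGB frame, in place. */
final class CaptionCompositor {
    private CaptionCompositor() {}

    /**
     * Draws the overlay with its top-left corner at (destX, destY).
     * Overlay pixels that fall outside the frame are skipped.
     */
    static void blend(int[] frameRgb, int frameWidth,
                      int[] overlayArgb, int overlayWidth,
                      int destX, int destY) {
        int frameHeight = frameRgb.length / frameWidth;
        int overlayHeight = overlayArgb.length / overlayWidth;
        for (int oy = 0; oy < overlayHeight; oy++) {
            int fy = destY + oy;
            if (fy < 0 || fy >= frameHeight) continue;
            for (int ox = 0; ox < overlayWidth; ox++) {
                int fx = destX + ox;
                if (fx < 0 || fx >= frameWidth) continue;
                int src = overlayArgb[oy * overlayWidth + ox];
                int alpha = src >>> 24;
                if (alpha == 0) continue; // fully transparent: keep the video pixel
                int index = fy * frameWidth + fx;
                int dst = frameRgb[index];
                int r = mix((src >> 16) & 0xFF, (dst >> 16) & 0xFF, alpha);
                int g = mix((src >> 8) & 0xFF, (dst >> 8) & 0xFF, alpha);
                int b = mix(src & 0xFF, dst & 0xFF, alpha);
                frameRgb[index] = (r << 16) | (g << 8) | b;
            }
        }
    }

    /** Source-over blend of one 8-bit channel, rounded to nearest. */
    private static int mix(int src, int dst, int alpha) {
        return (src * alpha + dst * (255 - alpha) + 127) / 255;
    }
}
```

Rendering the caption text once into an overlay image and reusing it for every frame until the operator moves the cursor keeps the per-frame cost down to this blend loop.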

Accessibility work doesn't make the highlight reel, but it's the code that matters most. Every frame this pipeline got right was a sentence a deaf audience member could follow.

Six things shipped, three hard ones solved.

Key contributions

- Built a frame-accurate subtitle burn-in pipeline: VideoReader → VideoSubtitleFilter → AudioInputFilter → VideoStreamer, compositing operator-selected caption text onto H.264 video at 30 fps before RTMP push.

- Engineered the RTMP streaming layer using JavaCV and FFmpeg (configurable bitrate, H.264 preset/tune, AAC audio), targeting YouTube Live, Twitch, and any RTMP endpoint.

- Implemented an HLS download engine with a local HTTP proxy so audience screens could pull the captioned stream from any CDN without software installs.

- Wrote a multi-format subtitle parser covering HTML, XML, .docx/.doc (Apache POI), SubRip (.srt), and a custom binary format for the enterprise overlay variant.

- Built the SRT session logger that timestamps every subtitle transition and exports a complete .srt file at session end — providing a permanent accessibility record.

- Shipped the standalone overlay variant adopted by one of the world's largest organisations and deployed across multiple countries, with an always-on-top projector overlay, transparency and font controls, character-limit enforcement, and a custom prepared-script file format.
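The SRT session logger listed above can be sketched as follows. This is an illustration only: `SrtSessionLog` and its methods are hypothetical names, and session time is passed in as milliseconds rather than read from a clock so the example stays deterministic.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

/** Hypothetical sketch: turns subtitle changes into numbered SubRip cues. */
final class SrtSessionLog {
    private final List<String> cues = new ArrayList<>();
    private String activeText; // null while no caption is on screen
    private long activeSinceMs;

    /** Records a caption change; a null or blank text clears the screen. */
    void onSubtitleChange(long sessionMs, String text) {
        closeActiveCue(sessionMs);
        if (text != null && !text.trim().isEmpty()) {
            activeText = text;
            activeSinceMs = sessionMs;
        }
    }

    /** Closes the last cue at session end and returns the .srt content. */
    String export(long sessionEndMs) {
        closeActiveCue(sessionEndMs);
        return String.join("\n", cues);
    }

    private void closeActiveCue(long endMs) {
        if (activeText == null) return;
        if (endMs > activeSinceMs) { // skip zero-length cues
            cues.add((cues.size() + 1) + "\n"
                    + stamp(activeSinceMs) + " --> " + stamp(endMs) + "\n"
                    + activeText + "\n");
        }
        activeText = null;
    }

    /** Formats milliseconds as the SubRip timestamp HH:MM:SS,mmm. */
    static String stamp(long ms) {
        long hours = ms / 3_600_000;
        long minutes = ms / 60_000 % 60;
        long seconds = ms / 1_000 % 60;
        long millis = ms % 1_000;
        return String.format(Locale.ROOT, "%02d:%02d:%02d,%03d", hours, minutes, seconds, millis);
    }
}
```

Each caption change closes the previous cue at the same instant the new one opens, so the exported file has no gaps or overlaps unless the operator clears the screen.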

Challenges solved

- Frame-pipeline back-pressure: the subtitle filter had to composite text onto raw video buffers at frame rate without leaking memory through lingering JavaCV Frame objects; solved with a fixed-capacity CircularFifoQueue and async subtitle image caching.

- Audio/video drift over long sessions: early HLS playback had audio drifting away from the subtitle cursor; required a custom PausableRelativeClock and a 2.5-second adaptive buffer tuned through multiple release cycles (v1.0.5 → v1.1.1).

- Zero infrastructure for the audience: the tool had to work in large auditoriums and community venues with no server team; the local HLS HTTP proxy meant an operator laptop was the entire broadcast chain.
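The fixed-capacity buffering idea behind the back-pressure fix can be sketched with the standard library alone. The project itself names Apache Commons' CircularFifoQueue; the class below is a stand-in built on `ArrayDeque`, and `BoundedFrameBuffer` and its release callback are hypothetical. The callback reflects an assumption that evicted frames need explicit cleanup rather than being left for the garbage collector.

```java
import java.util.ArrayDeque;
import java.util.function.Consumer;

/** Hypothetical bounded buffer: when full, the oldest frame is evicted and released. */
final class BoundedFrameBuffer<T> {
    private final ArrayDeque<T> frames = new ArrayDeque<>();
    private final int capacity;
    private final Consumer<T> release;

    BoundedFrameBuffer(int capacity, Consumer<T> release) {
        if (capacity <= 0) {
            throw new IllegalArgumentException("capacity must be positive");
        }
        this.capacity = capacity;
        this.release = release;
    }

    /** Adds a frame, evicting and releasing the oldest one first if the buffer is full. */
    synchronized void offer(T frame) {
        if (frames.size() == capacity) {
            release.accept(frames.pollFirst());
        }
        frames.addLast(frame);
    }

    /** Removes and returns the oldest buffered frame, or null if the buffer is empty. */
    synchronized T poll() {
        return frames.pollFirst();
    }

    synchronized int size() {
        return frames.size();
    }
}
```

Because the buffer never grows past its capacity, a slow encoder costs dropped frames instead of unbounded memory, which is usually the better trade for a live stream.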


— Davor Majc, founder, Numen